Generative AI has been a huge talking point in the IT industry lately, and we at NordHero want to showcase what it can do in practice. In this blog post, we’ll demonstrate how we used Amazon Bedrock to add an automated step to our website’s CI/CD pipeline that creates a short summary of each new blog post.

First things first

One important question should be asked right away: why Amazon Bedrock? What are its benefits, and why not use some other AI service, such as OpenAI’s ChatGPT?

NordHero’s CTO Janne Kuha highlights the reasons:

There are at least two significant points to make:

Firstly, Amazon Bedrock is ready for business use, with comprehensive data security. Generative AI services need access to your confidential data to be useful. With Amazon Bedrock, your confidential data is encrypted and stored in the AWS Region where you use Amazon Bedrock, so you don’t need to worry about third-party model providers accessing your data. Amazon Bedrock is also in scope for several compliance standards, such as ISO 27001 and PiTuKri, the Finnish public sector cloud security criteria.

Secondly, Amazon Bedrock provides unified, API-based access to a variety of generative AI models. Models are evolving at the speed of light, and new models quickly surpass old ones. Because every model is called through the same Amazon Bedrock API, switching to a different GenAI model is straightforward, so you can change the underlying model as the world of GenAI evolves.
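To illustrate what switching models can look like in code, here is a minimal sketch, assuming the Bedrock request formats of Anthropic Claude v2.x text completions and Amazon Titan Text. The `invoke_model` call itself stays the same; only the model ID and the model-specific request and response JSON change. The names `ADAPTERS` and `generate_text` are hypothetical, and the client is passed in so that any `bedrock-runtime` client (for example one created with `boto3.client("bedrock-runtime")`) can be used.

```python
import json
from typing import Any, Callable, Dict, Tuple

# Each entry: (build request body from a prompt, extract text from the parsed response).
# Formats assumed: Claude v2.x text completions and Amazon Titan Text on Bedrock.
ModelAdapter = Tuple[Callable[[str], Dict[str, Any]], Callable[[Dict[str, Any]], str]]

ADAPTERS: Dict[str, ModelAdapter] = {
    "anthropic.claude-v2": (
        lambda prompt: {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": 300,
        },
        lambda body: body["completion"],
    ),
    "amazon.titan-text": (
        lambda prompt: {
            "inputText": prompt,
            "textGenerationConfig": {"maxTokenCount": 300},
        },
        lambda body: body["results"][0]["outputText"],
    ),
}


def generate_text(client: Any, model_id: str, prompt: str) -> str:
    """Send the prompt to any supported model through the same InvokeModel call."""
    for prefix, (build_body, extract_text) in ADAPTERS.items():
        if model_id.startswith(prefix):
            response = client.invoke_model(
                body=json.dumps(build_body(prompt)),
                modelId=model_id,
                accept="application/json",
                contentType="application/json",
            )
            return extract_text(json.loads(response["body"].read()))
    raise ValueError(f"No request format known for model {model_id!r}")
```

Switching from Claude to a Titan text model then only means passing a different model ID, such as `amazon.titan-text-express-v1`; the surrounding pipeline code does not change.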

The start of the project

The idea for this project came from a desire to demonstrate how generative AI can be used in practice in the real world. We saw the blog posts on our website as the perfect example of how you can use Amazon Bedrock to take advantage of constantly improving machine learning models. Let’s face it, AI is here to stay.

We started the project by defining our use case and diving into the Amazon Bedrock documentation. Our objective was that whenever one of our hero makers writes a new blog post, an automated process generates a 150-word summary of the post, builds the page with the summary, and deploys the build, all without anyone having to manually tinker with any part of the summary process.

Generative AI by code made simple with Amazon Bedrock

Let’s start with the website deployment process. Our site uses AWS Amplify Web Hosting, and Amplify’s build process automatically deploys the site from a specific branch in a GitHub repository. We use GitHub Actions and AWS CDK to build and deploy the website’s infrastructure, and we also trigger the Amplify build process from GitHub Actions.

There were several points in our CI/CD pipeline where we could add the summarizing step. In our case, a good option was to use a GitHub Actions workflow to:

- check out the GitHub branch

- investigate whether there are new or changed blog posts that need summarizing

- invoke the Bedrock API with each incoming blog post one by one, and store the summaries as new text files

- push the generated summary files back to the repository branch

- trigger Amplify’s build process with the branch that contains the new AI-generated content.
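The third step is handled by our genSummary.py script. Its full source isn’t shown in this post, so the following is only a hypothetical sketch of such a loop, under stated assumptions: it reads the file names listed in newFiles.txt (which the GitHub Actions job below produces), summarizes each Markdown post with a caller-supplied function, and writes each summary as a text file into a `bedrock-summaries` folder. The function name, the `.md` filter and the output naming scheme are all assumptions.

```python
from pathlib import Path
from typing import Callable, List, Sequence


def summarize_new_posts(
    posts_dir: Path,
    summarize: Callable[[str], str],
    list_file: str = "newFiles.txt",
    out_dir_name: str = "bedrock-summaries",  # assumed output folder
    suffixes: Sequence[str] = (".md",),  # assumed post file type
) -> List[Path]:
    """Summarize each post listed in list_file, one by one; return the written paths."""
    out_dir = posts_dir / out_dir_name
    out_dir.mkdir(exist_ok=True)
    listed = (posts_dir / list_file).read_text(encoding="utf-8").splitlines()
    written = []
    for name in (line.strip() for line in listed):
        post = posts_dir / name
        # Skip blank lines, non-post files and names not found in this folder
        if not name or post.suffix not in suffixes or not post.is_file():
            continue
        summary = summarize(post.read_text(encoding="utf-8"))
        target = out_dir / f"{post.stem}.txt"  # hypothetical naming scheme
        target.write_text(summary.strip() + "\n", encoding="utf-8")
        written.append(target)
    return written
```

Passing the summarizer in as a function keeps the file handling separate from the Bedrock call, so the loop can be tried out locally without AWS credentials.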

Here is the related GitHub Actions job:

```yaml
# ...
amplify_build:
  if: ${{ !contains(github.event.head_commit.message, '[skip-build]') }}
  runs-on: ubuntu-latest
  steps:
    - name: Checkout
      uses: actions/checkout@v1

    - name: Configure AWS Credentials
      uses: aws-actions/configure-aws-credentials@v4
      with:
        aws-region: <AWS Region>
        role-to-assume: <IAM Role ARN>

    - name: Generate post summaries
      run: |
        cd www/content/en/posts
        pip3 install boto3
        # List posts added (A) or modified (M) compared to production,
        # excluding the generated summaries folder; -r skips basename on empty input
        git diff --diff-filter=AM origin/production --name-only -- . :^bedrock-summaries \
          | xargs -r -n 1 basename > newFiles.txt
        python3 genSummary.py
        rm newFiles.txt
        git config --global user.name 'GitHub Actions'
        git config --global user.email '<>'
        git remote set-url origin \
          https://x-access-token:${{ secrets.OAUTH_TOKEN }}@github.com/${{ github.repository }}
        git add .
        # Commit only if summaries were generated; [skip-build] prevents a build loop
        git diff --cached --quiet || git commit -m "Generated AI summaries [skip-build]"
        git push origin HEAD:${{ github.ref }}

    - name: Deploy Amplify
      working-directory: .github/scripts
      run: ./amplify-deploy.sh ${{ vars.AMPLIFY_APP_ID }} ${{ github.ref_name }}
# ...
```
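The contents of amplify-deploy.sh aren’t shown here. As a hedged sketch of what such a helper might do, the Amplify StartJob API (boto3’s `start_job` with `jobType="RELEASE"`) starts a new job with the latest change on a branch; a shell script would typically call `aws amplify start-job` instead. The function `trigger_amplify_release` and the job reason text are hypothetical, and the client is passed in rather than created inside the function.

```python
from typing import Any


def trigger_amplify_release(client: Any, app_id: str, branch_name: str) -> str:
    """Start an Amplify RELEASE job for the branch and return the new job ID."""
    response = client.start_job(
        appId=app_id,
        branchName=branch_name,
        jobType="RELEASE",  # start a new job with the latest change on the branch
        jobReason="Deploy with AI-generated summaries",  # hypothetical label
    )
    return response["jobSummary"]["jobId"]
```

In the workflow, this could be called with `boto3.client("amplify")` and the same two values the job passes to amplify-deploy.sh: the Amplify app ID and the branch name.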

The API call to Amazon Bedrock turned out to be one of the easiest parts to code and implement. The range of AI models in the eu-central-1 region was still somewhat limited compared to the options in us-east-1, but we still had good options for text generation. After testing them with our use case, we chose Anthropic’s Claude v2.1 text model. We then wrote a simple Python script (genSummary.py in the GitHub Actions job above) that makes the API call using boto3. You can see the relevant part of the script below.

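```python
import json

import boto3

# ...

# Specifying the service and region
brt = boto3.client(service_name='bedrock-runtime', region_name='eu-central-1')

# Variables for the Bedrock API call. Claude v2.x text models expect the prompt
# in the "\n\nHuman: ... \n\nAssistant:" format; blogPostContent holds the
# post text read earlier in the script (not shown).
body = json.dumps({
    "prompt": "\n\nHuman: Write a 150 word summary of the following blog post: "
              + blogPostContent + "\n\nAssistant:",
    "max_tokens_to_sample": 300,
    "temperature": 1,
    "top_p": 0.99,
    "top_k": 250
})

modelId = 'anthropic.claude-v2:1'
accept = 'application/json'
contentType = 'application/json'

# Invoke the Bedrock model with the variables above and save the response body
response = brt.invoke_model(body=body, modelId=modelId, accept=accept, contentType=contentType)
responseBody = json.loads(response.get('body').read())

# The generated summary text is returned in the "completion" field
summary = responseBody.get('completion')

# ...
```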

As you can see, the API call is simple and needs nothing more than a JSON request body and a few request settings. In the request body, you can change the parameters the text model uses, which lets you fine-tune the model’s output if, for example, you notice its answers aren’t quite right: a lower temperature makes the wording more predictable, and max_tokens_to_sample caps the length of the response.
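To make that kind of tuning easier, the request body could be built by a small function whose parameters default to the values used in our script. This is a sketch, not part of the original script: `build_summary_request` and `extract_summary` are hypothetical names, and the temperature range check assumes Claude’s 0 to 1 range on Bedrock.

```python
import json


def build_summary_request(
    post_text: str,
    words: int = 150,
    max_tokens_to_sample: int = 300,
    temperature: float = 1.0,  # lower values give more predictable wording
    top_p: float = 0.99,
    top_k: int = 250,
) -> str:
    """Return the JSON request body for a Claude v2.x summary request."""
    if not 0.0 <= temperature <= 1.0:
        raise ValueError("temperature must be between 0 and 1")
    prompt = (
        f"\n\nHuman: Write a {words} word summary of the following blog post: "
        f"{post_text}\n\nAssistant:"
    )
    return json.dumps({
        "prompt": prompt,
        "max_tokens_to_sample": max_tokens_to_sample,
        "temperature": temperature,
        "top_p": top_p,
        "top_k": top_k,
    })


def extract_summary(response_body: dict) -> str:
    """Pull the generated text out of a parsed Claude v2.x response."""
    return response_body["completion"].strip()
```

For example, `brt.invoke_model(body=build_summary_request(blogPostContent, temperature=0.5), modelId=modelId, accept=accept, contentType=contentType)` would ask for less varied wording while leaving the rest of the call unchanged.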

Note: The easiest way to find the Amazon Bedrock API call formats and experiment with the different parameters is to try them yourself in Amazon Bedrock’s Playground feature in the AWS Console.

Once the script has generated a summary for each new blog post, we simply build and deploy our website with the latest content using AWS Amplify. In the end, the whole summary process was implemented in code and nothing had to be configured in the AWS Console, which makes the feature easy to repeat in different environments.
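Keeping everything in code also covers permissions: the IAM role the workflow assumes must be allowed to call Bedrock. As a minimal sketch, assuming a least-privilege policy is wanted and only the Claude v2.1 model in eu-central-1 is used, the policy document could be generated as below. The function name is hypothetical, and the role may need other permissions (for example for Amplify) that aren’t shown.

```python
import json


def bedrock_invoke_policy(region: str, model_ids: list) -> dict:
    """Build an IAM policy allowing InvokeModel on the given foundation models only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "bedrock:InvokeModel",
                # Foundation model ARNs have an empty account ID segment
                "Resource": [
                    f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
                    for model_id in model_ids
                ],
            }
        ],
    }


policy_json = json.dumps(bedrock_invoke_policy("eu-central-1", ["anthropic.claude-v2:1"]), indent=2)
```

In a CDK stack, the same statement could be attached to the role with `role.add_to_policy(iam.PolicyStatement(actions=[...], resources=[...]))`.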

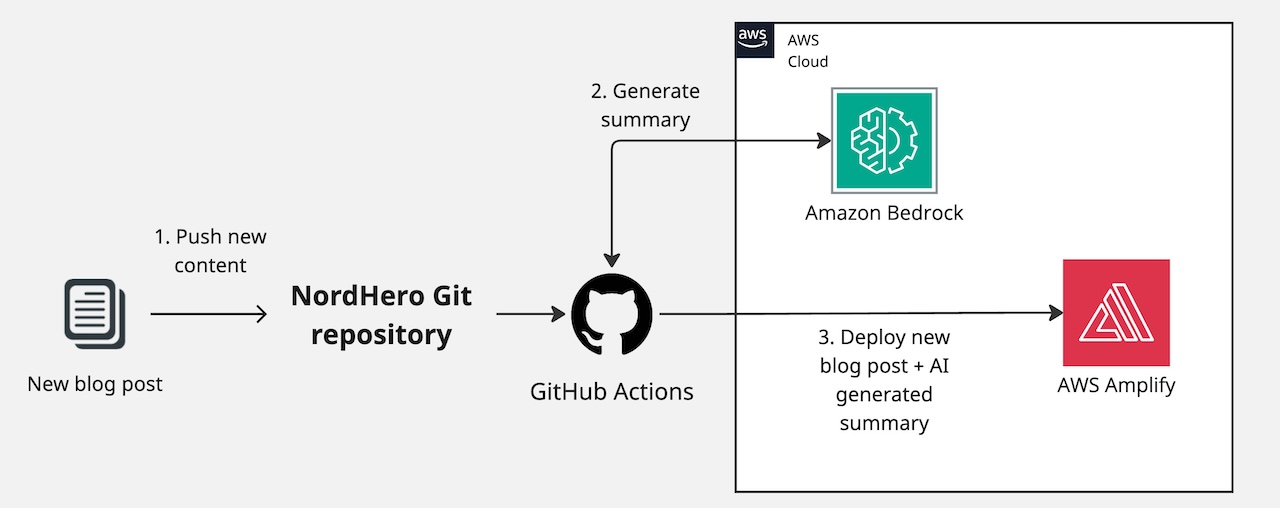

I’ve drawn up a simple flowchart of our pipeline with the new generative AI feature:

The final results and thoughts

Not long after starting the project, we were able to deploy the first version to our site. The Claude v2.1 text model efficiently generates a short summary of a given blog post, and so far it has produced very coherent text that can be published as is. All in all, we’re very happy with what we’ve achieved.

This project has been a great exercise in applying generative AI technology in the real world. What I took away from it, as someone working with Amazon Bedrock for the first time, was how simple and effective Amazon Bedrock is to use. As a developer, I just had to request access to the models on my AWS account, and then I could use them in my code. Getting access to all the models took around 15 minutes and could be done directly from the AWS Console. Currently, here in the EU, only Anthropic Claude and Amazon Titan models are available for text processing, but I hope more are on the way. Of course, if you want to try the other models, you can do so by switching your region across the Atlantic to the USA.

–

As a last note, we’d like to offer our professional services to anyone interested in implementing AI in their own projects or workflows. So if you’re looking to take advantage of the latest AI technology, please contact us here at NordHero!